The State of AI in Cybersecurity 2026

February 23, 2026

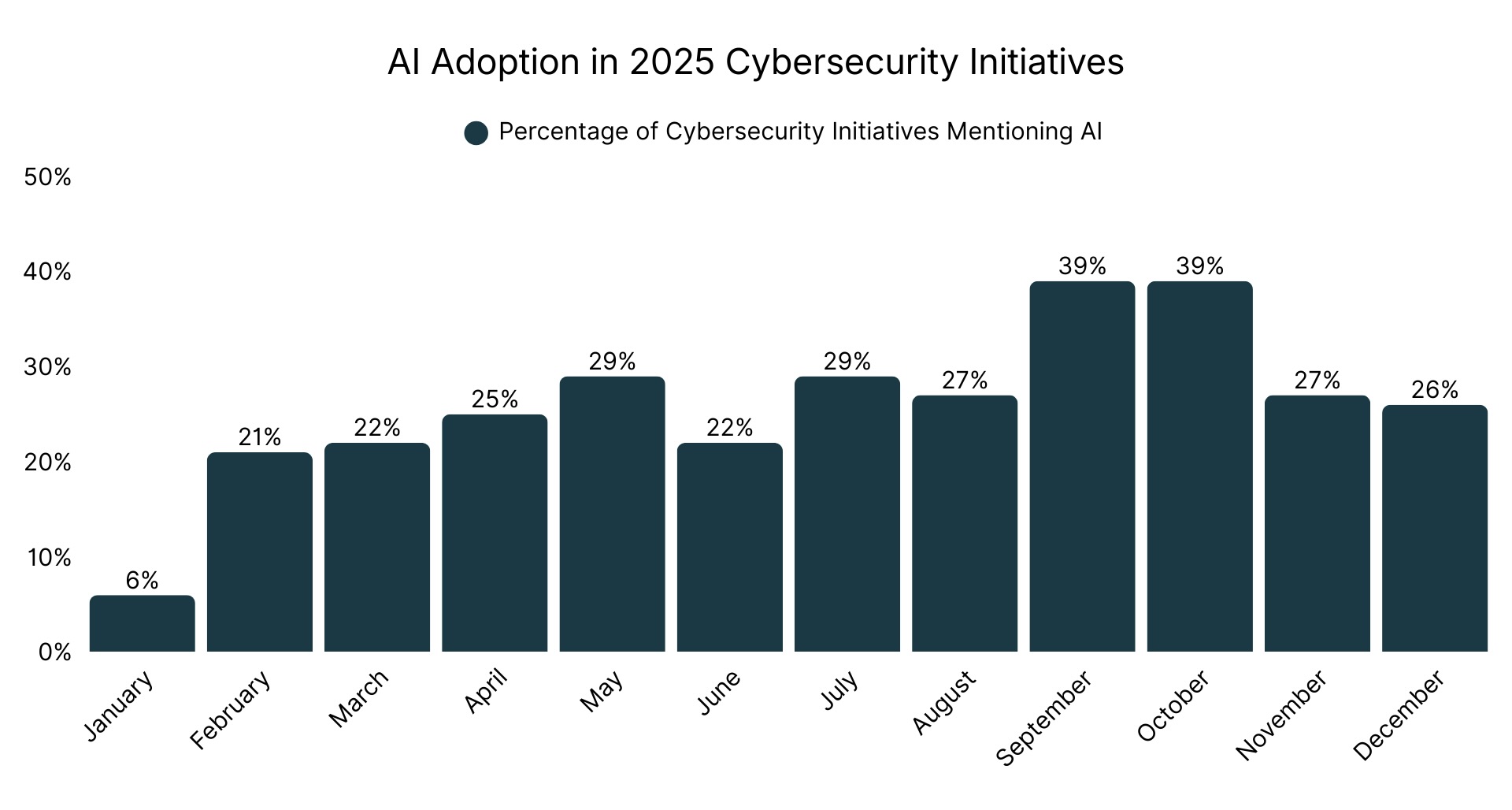

Security leaders (“Sages”) launched 1,300 cybersecurity projects (“initiatives”) on Sagetap in 2025. By Q4, nearly one in three involved AI. This report analyzes 264 verified H2 2025 initiatives from CISOs and security executives who documented real buying decisions through the Sagetap platform.

Why this matters: Traditional analyst reports predict where markets might go. Sagetap’s report shows what security leaders actually evaluated and deployed between July and December 2025, captured through real buying processes, peer validation, and documented outcomes — including emerging vendors and innovative startups that may not yet appear on traditional analyst radars.

This report exists because of our Sages, the security leaders who documented their initiatives, shared their evaluation criteria, and contributed their expertise to build intelligence that benefits the entire community.

The report combines documented H2 2025 initiative data with Sagetap's strategic analysis and industry best practices, representing our interpretation of patterns as well as forward-looking assessments. Statistical claims are sourced from verified initiative data, and strategic guidance sections (“The Reality for 2026”) provide Sagetap's recommendations based on observed patterns and industry expertise.

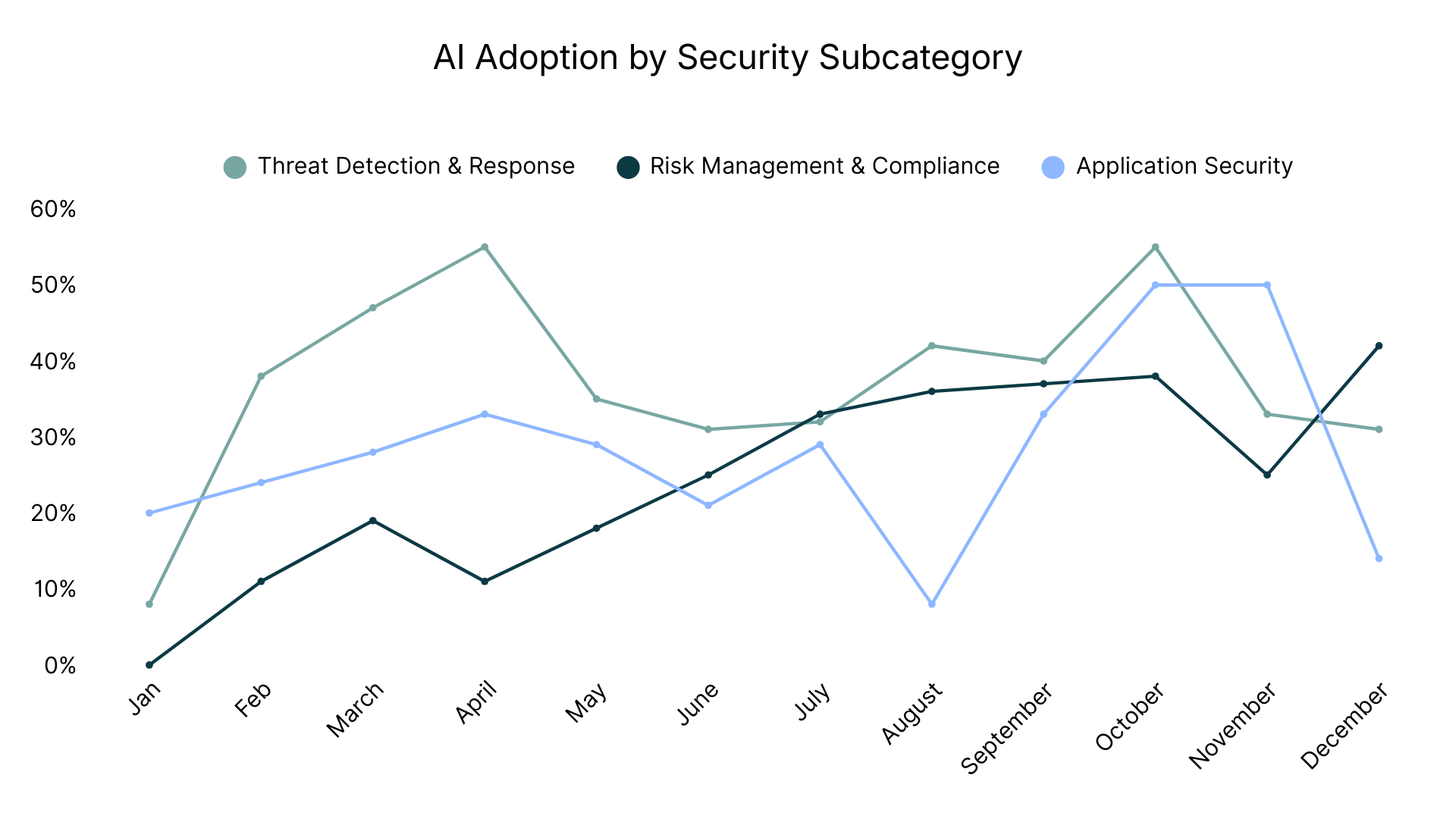

Our focus: This analysis concentrates on the three security categories where AI adoption accelerated fastest in H2 2025: Threat Detection & Response (125 initiatives, 40% AI adoption), Risk Management & Compliance (92 initiatives, 36% AI adoption), and Application Security (47 initiatives, 23% AI adoption). These categories represent where security executives planned to invest most heavily in AI during the second half of 2025.

Executive Summary: Three Crisis Points Driving AI Acceleration

AI adoption in cybersecurity initiatives accelerated dramatically in H2 2025, driven by three converging crises that made AI deployment essential rather than experimental.

SOC analyst shortage crisis: Manual triage couldn't keep pace with alert volumes. Extended hiring cycles combined with persistent retention challenges made AI the only viable scaling strategy. Multiple initiatives explicitly mentioned seeking AI "without expanding headcount," signaling that hiring has become too slow to meet operational needs.

Board-level risk quantification mandate: Executives rejected qualitative risk assessments and demanded quantified breach costs in dollar terms. Sages deploying AI risk quantification platforms sought faster budget approval by presenting financial risk figures rather than traditional “red/yellow/green” risk dashboards. The shift from "are we secure?" to "how much would a breach cost us?" created urgent demand for platforms that translate technical risk into financial language boards understand.

AI-generated code security gap: Developers using Copilot and similar AI coding assistants shipped code faster than security teams could validate it manually. Traditional security tools couldn't detect vulnerabilities introduced by AI-generated code, creating a gap that only AI-powered security tools could close at the required speed.

H2 2025 Key Findings:

- Threat Detection & Response: 40% AI adoption (peaked at 55% in October)

- Risk Management & Compliance: Steady growth throughout 2025, reaching 42% in December

- Application Security: 23% AI adoption with strategic pilots before enterprise commitments

- Multiple documented initiatives explicitly mentioned plans to replace Splunk SIEM

- TPRM replacements (OneTrust, BitSight) were driven by "boxed in" complaints

- Q1 2026 renewal deadlines are creating a mass SIEM migration wave

Threat Detection & Response: Leading AI Adoption at 40%

Among the three security categories with highest AI adoption in H2 2025, Threat Detection & Response leads with 40% of the 125 initiatives involving AI capabilities. The October peak at 55% suggests this category is approaching majority adoption faster than other security domains.

Multiple Splunk Replacements Signal Enterprise SIEM Market Shift

Sagetap's H2 2025 data captured numerous initiatives where Sages explicitly stated they were replacing Splunk Enterprise Security. Long-time Splunk customers are migrating to what they describe as "AI-native data pipelines" and "next-generation SIEM" platforms. The driver goes beyond cost alone: these teams fundamentally disagree with ingestion-based pricing models that penalize organizations for collecting security data. The more logs you collect, the higher your costs, creating perverse incentives to reduce security visibility to control expenses.

One team's assessment: "We have been a long time customer of Splunk but recognize it is no longer the right SIEM for how we operate. Combine this with the significant costs incurred based on ingest and/or query based access within the system we know there must be a better way with a next generation SIEM. Strategically we want to replace this before renewal in Q1 2026."

Another team's objective was straightforward: "Our objective is to replace SPLUNK SIEM with a cost effective and easier to use SIEM product."

A third initiative focused on architectural transformation: "We’re replacing Splunk with an AI-native data pipeline to reduce ingestion costs, normalize multi-source security logs, and retain full detection fidelity while improving efficiency, scalability, and visibility across our hybrid cloud security operations."

Several of the initiatives explicitly mentioned "Q1 2026 renewal" as their deadline, creating urgency for migration decisions.

The Strategic Reality for 2026

These documented Splunk replacements represent the first wave of enterprises rejecting ingestion-based pricing as cloud architectures make that economic model fundamentally unsustainable. The uncomfortable question you'll face at the board level: how do you justify security costs growing faster than the business when peer organizations are achieving significant SIEM cost reductions while maintaining or improving detection coverage?

Based on initiative patterns and cost language, Sagetap believes organizations completing these migrations in 2026 will enter 2027 with cost structures that scale with their security posture and platforms built for AI-native operations. Those extending current contracts face another year watching competitors operate more efficiently with better economics, then explaining to boards and finance teams why they're locked into a pricing model the market is actively rejecting.

SIEM Migrations: Change Management Is the Bottleneck to ROI

Change management challenges can lead to extended dual-platform operation when analyst retraining timelines exceed expectations, a risk several initiatives explicitly planned to address. This suggests that for CISOs, change management (not tooling) is the critical path to realizing ROI on SIEM transitions.

Initiatives commonly described a gap between technical deployment and analyst adoption. While some teams completed SIEM deployments relatively quickly, others reported delays due to analyst retraining or change management needs. Without proactive investment in analyst enablement, teams often found themselves maintaining legacy platforms longer than budgeted, compounding operational overhead and delaying the strategic benefits of migration. Several initiatives referenced planning for internal training and adoption support, though few detailed specific success patterns or training approaches.

The Execution Reality for 2026

Recognize that SIEM migration failure happens in the human layer, not the technical layer. Organizations that budgeted only for licenses and professional services may end up running extended dual-platform operations if analyst adoption lags, creating operational costs that can exceed initial implementation budgets.

Your true budget is the fully-loaded cost, including productivity loss during extended dual operations when your security team operates at reduced effectiveness. If a vendor quotes $500K in licenses, for example, budget significantly higher for total implementation. Initiatives that treated migration purely as a technical exercise (without dedicated change management resourcing) often incurred unplanned costs maintaining legacy platforms in parallel, eroding project ROI.

SOC Automation Budgeted as Talent Acquisition

Multiple SOC automation initiatives included the phrase "without expanding headcount," signaling a fundamental shift in how Sages budget and justify AI investments. These initiatives resemble talent acquisition projects — but instead of hiring new analysts, teams are justifying AI spend as a headcount alternative. That changes the stakeholder dynamics and approval flows. Hiring SOC analysts remains challenging, with extended hiring cycles that don't match urgent security needs. Teams deploying AI triage are filling positions they can't hire for.

One Sage (also replacing Splunk) explained their situation: "Our in-house SOC relies on Splunk for triage, but rising alert overload has slowed investigations and increased analyst fatigue. I am seeking an AI-driven replacement that streamlines Tier 1 triage, reduces false positives, and enhances response times without expanding headcount."

Another initiative sought to "reduce alert fatigue and backlog by automatically triaging with transparent context" while moving "away from the 'black box' nature of our current MDR" to gain "better control and transparency over our security posture." This language reveals a preference shift: teams want force multiplication without losing visibility into how decisions get made.

Teams emphasized allowing time for analysts to build trust in automation. This adoption curve matters for project planning — technical deployment may complete quickly, but analyst acceptance requires dedicated change management investment. Sages also stressed explainability: AI systems must articulate their reasoning to satisfy audit requirements and build analyst confidence in automated decisions.

The Organizational Structure Shift for 2026

Positioning SOC automation as a headcount solution rather than a tooling request reframes the conversation with HR and finance, often unlocking unallocated budget intended for roles that remain unfilled due to hiring constraints. Those Tier 1 positions you can't fill are allocated budget sitting unused while your team drowns in alerts.

But solving today's triage crisis creates tomorrow's talent problem: you'll likely need AI SOC engineers, who command significant salary premiums over traditional SOC analysts and are even harder to find. The winning move is building that capability internally now by upskilling senior analysts while demand for traditional Tier 1 positions continues to evolve.

Position yourself ahead of that structural shift or explain to your board why your team structure looks increasingly outdated compared to peers operating more efficiently with different talent models.

"Agentic AI" Language Signals Autonomous Execution Capability

H2 initiatives introduced terminology that didn't exist in security conversations six months prior: "agentic AI for SOC operations" and "LLM-based AI to automate low-level playbooks." This represents a fundamental capability evolution beyond AI-assisted tools that merely provide recommendations. Sages want platforms that autonomously execute playbooks rather than copilots that suggest next steps and wait for human confirmation.

The distinction matters for both capability and liability. AI-augmented means AI suggests actions and humans decide whether to execute them; agentic means AI executes them itself, which raises questions of operational boundary-setting, rollback accountability, and SOC role evolution. One initiative sought "solutions that use LLM-based AI to automate low level playbooks and processes for our SOC. Ideal solutions can work in a best-of-breed environment and a variety of tools the SOC uses to support its operations."

A key evaluation question is: "What happens when it gets it wrong?" Teams need clear answers about failure modes, rollback procedures, and accountability when autonomous AI makes incorrect decisions. Explainability remains non-negotiable even for autonomous systems, perhaps especially for autonomous systems given the higher stakes and audit requirements.

The Liability Question for 2026

The agentic AI conversation is fundamentally about liability transfer and risk tolerance. When AI suggests and you approve, you own the decision. When AI executes autonomously, accountability shifts in ways most organizations haven't fully considered. The more strategic framing is where the boundaries should be drawn today, and what controls must be in place to expand them responsibly over time.

Start with a shadow mode of 30-60 days in which AI recommends without executing, then expand gradually to autonomous execution for low-risk playbooks, maintaining rollback capabilities throughout. Agentic AI is becoming a competitive differentiator for SOC efficiency.
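The rollout described above (shadow mode first, then autonomous execution limited to low-risk playbooks, with rollback available throughout) can be sketched as a simple gate. This is a minimal illustration, not a vendor's implementation: the `PlaybookGate` class, the `Mode` enum, and playbook names such as `quarantine_email` are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    SHADOW = "shadow"          # AI recommends only; nothing is executed
    AUTONOMOUS = "autonomous"  # AI may execute allowlisted low-risk playbooks


@dataclass
class PlaybookGate:
    """Hypothetical gate deciding whether an AI-proposed action runs."""
    mode: Mode = Mode.SHADOW
    low_risk_playbooks: set = field(default_factory=set)  # assumed allowlist
    executed: list = field(default_factory=list)          # audit trail for rollback

    def handle(self, playbook: str, target: str) -> str:
        """Return 'executed' if the action runs, else 'recommended'."""
        if self.mode is Mode.AUTONOMOUS and playbook in self.low_risk_playbooks:
            self.executed.append((playbook, target))
            return "executed"
        return "recommended"  # a human analyst decides

    def rollback_last(self):
        """Remove and return the most recent autonomous action, if any."""
        return self.executed.pop() if self.executed else None


gate = PlaybookGate(low_risk_playbooks={"quarantine_email"})
shadow_result = gate.handle("quarantine_email", "msg-123")   # shadow mode
gate.mode = Mode.AUTONOMOUS                                   # after the shadow period
auto_result = gate.handle("quarantine_email", "msg-123")     # allowlisted
high_risk_result = gate.handle("isolate_host", "srv-9")      # not allowlisted
```

Keeping the allowlist explicit and recording every executed action is one way to make the boundary of autonomy auditable; a real deployment would also need the reversal logic behind `rollback_last`.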

Will you have spent 2026 building institutional knowledge about safe autonomous deployment, or starting from zero while competitors operate mature autonomous operations handling routine playbooks without human intervention?

New AI-Enabled Attack Categories Force Defensive AI Adoption

H2 initiatives tracked threat categories that barely existed a year ago. Sages needed tools for "detecting and responding to narrative-driven risks, synthetic identities, and disinformation campaigns." Financial services teams deployed solutions to "enhance call center security by leveraging AI-driven real-time detection and intervention against social engineering attacks, including deepfakes and vishing."

The Defensive Gap Widening in 2026

The AI threat landscape fully arrived in H2 2025, and many security programs remain structurally unprepared. Cross-platform narrative attacks, deepfake vishing, and AI-generated polymorphic malware represent threats manual processes fundamentally cannot address at required speed and scale.

For financial services and call centers specifically, implementing AI-powered voice authentication in the near term is a necessary control before deepfake vishing becomes a widespread attack vector that traditional authentication cannot detect.

The broader reality: if attackers use AI and you defend with manual processes, you're fighting an asymmetric battle where the performance gap widens every quarter.

Top Threat Detection & Response Vendors Sages Evaluated in H2 2025

Based on documented H2 2025 initiatives, Sages most frequently considered these TDR platforms:

Risk Management & Compliance: Fastest-Growing AI Category

Risk Management & Compliance showed the fastest AI adoption growth trajectory in H2 2025, averaging 36% of the 92 initiatives and climbing to 42% in December. This category represents the second-highest AI adoption among the three focus areas.

Boards Now Require Quantified Breach Costs, Not Qualitative Dashboards

Boards rejected "red/yellow/green" risk dashboards and demanded quantified breach costs in dollar terms during H2 2025. Traditional risk reporting relied on qualitative severity ratings and compliance dashboard metrics. Boards determined these assessments were insufficient for making resource allocation decisions. The shift from "are we secure?" to "quantify the financial impact of a potential breach" created demand for AI platforms that model breach scenarios and translate technical risk into dollar figures executives use for business decisions.

One cybersecurity executive explained the board pressure: "Given that our team is small, the ability to present clear, financial risk figures to top management is essential to support both our strategic decision-making and future growth initiatives."

AI platforms became essential because they continuously update risk models based on changing conditions. Manual approaches using spreadsheets provide static snapshots that become outdated within weeks. AI provides real-time, board-ready intelligence that updates as attack surfaces, threats, and business context change. Sages consistently emphasized the need to translate cyber risk into financial terms to improve executive understanding and strengthen board-level discussions, moving beyond traditional “red/yellow/green” dashboards. The CFO becomes an advocate when security teams speak the language of financial risk.

Successful risk quantification approaches often begin by defining the executive presentation format before selecting tools, ensuring platforms generate metrics finance teams actually use.

The Cultural Transformation Required for 2026

The board mandate for quantified risk isn't about better reporting; it's about fundamentally repositioning security from cost center to strategic risk function. The CFO needs cyber risk in the same financial language as market risk: dollar impact, probability distributions, risk-adjusted returns on investments.

The sophisticated play is engaging your CFO early to co-develop the quantification framework before selecting platforms, because the metric that matters is the one finance trusts enough to use in actual resource allocation decisions. Build your framework around business impact categories your CFO already tracks — revenue impact, regulatory penalties, customer retention costs, and operational disruption — not security-centric metrics boards don't connect to outcomes.

If budget doesn't support dedicated AI platforms, build internal models using historical incident data and industry breach cost research. Imperfect quantification that executives can use for decisions beats perfect qualitative assessments that don't inform resource allocation.
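A minimal sketch of such an internal model, assuming the simplest possible approach: expected annual loss per category equals total historical loss divided by years observed (equivalently, incidents per year times average cost per incident). The function name, the categories, and every dollar figure below are illustrative assumptions, not data from the initiatives.

```python
def annualized_loss(incident_costs, years_observed):
    """Expected annual loss: total historical loss spread over the observation window."""
    if years_observed <= 0:
        raise ValueError("years_observed must be positive")
    return sum(incident_costs) / years_observed


# Illustrative incident history over a five-year window (made-up numbers)
history = {
    "ransomware": [400_000, 600_000],
    "vendor_breach": [150_000],
}

expected_loss = {
    category: annualized_loss(costs, years_observed=5)
    for category, costs in history.items()
}
total_expected_loss = sum(expected_loss.values())
```

A point estimate like this hides the spread of outcomes; teams that want probability distributions would replace the average with simulated scenarios, but even this rough figure gives finance a dollar amount to weigh against control costs.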

Organizations presenting quantified risk to boards early in 2026 will change how their security function is funded and perceived, while those still presenting red/yellow/green will explain why they can't speak the language of business risk.

Two TPRM Platform Replacements Driven by Rigid Risk Scoring

Sagetap's H2 data captured two initiatives explicitly seeking to replace third-party risk management platforms. The recurring complaint in both cases was being "boxed in" by vendor-defined risk scoring methodologies that don't match organizational risk tolerance or business priorities.

The core problem is that vendor-defined risk scoring doesn't match how these organizations actually assess and prioritize third-party risk. Sages want customizable AI platforms they can tune to their specific context, rather than one-size-fits-all frameworks that apply generic risk models designed for broad market appeal instead of organizational nuance.

One OneTrust replacement initiative explained: "Replace OneTrust with a platform that offers real-time AI-driven risk insights, continuous monitoring, and improved automation. We are boxed in with OneTrust's risk scoring methodology and would like to tie it to our firm's [risk model]."

One BitSight replacement initiative sought a "more advanced, AI-driven third-party risk management platform that provides continuous, data-driven insights" beyond what their current platform offered.

Traditional TPRM relied on quarterly vendor surveys, a slow, manual process whose results are outdated almost as soon as they are collected and which places a significant burden on vendor management teams chasing responses. Teams sought "continuous, external privacy-posture intelligence to monitor top vendors" without requiring vendors to complete lengthy questionnaires.

AI platforms monitor vendor security through observable external signals: security certificates, breach disclosures, domain configurations, third-party security ratings. AI analyzes these signals and scores risk automatically, eliminating vendor survey burden while providing fresher, more accurate data.

The Methodology Ownership Question for 2026

The "boxed in" complaint reveals who owns the risk methodology in your organization (your team or your vendor). Generic scoring optimized for market breadth can't match nuanced tolerance developed through your business model and regulatory environment. TPRM selection is really methodology selection: are you buying your vendor's risk framework or a platform you can configure to yours?

Test during evaluations with specific scenarios: "Our organization considers data residency more critical than certifications for European vendors. Can your platform weight that?" Vendors with genuine customization demonstrate immediately; rigid frameworks deflect to "best practices" that don't reflect your reality. OneTrust and similar vendors likely face significant customer evaluation and potential churn in 2026.
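What "weighting" means in the data residency scenario can be shown with a small sketch: the same vendor signals produce different scores under generic weights and under organization-specific weights. The signal names, the 0-100 risk scale, and the weights are hypothetical and do not describe any vendor's scoring method.

```python
def vendor_risk_score(signals, weights):
    """Weighted average of risk signals on a 0-100 scale; higher means riskier."""
    total_weight = sum(weights.get(name, 0) for name in signals)
    if total_weight == 0:
        raise ValueError("no weighted signals provided")
    weighted_sum = sum(value * weights.get(name, 0) for name, value in signals.items())
    return weighted_sum / total_weight


# A European vendor with strong certifications but poor data residency controls
signals = {"data_residency": 90, "certifications": 10}

generic_weights = {"data_residency": 1, "certifications": 1}  # vendor default
our_weights = {"data_residency": 3, "certifications": 1}      # organization's priorities

generic_score = vendor_risk_score(signals, generic_weights)
tuned_score = vendor_risk_score(signals, our_weights)
```

Under these assumed numbers the vendor looks moderate with generic weights and clearly riskier once residency is weighted three times as heavily, which is the kind of difference a configurable platform should be able to demonstrate during evaluation.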

AI Governance Driven by Contract Requirements, Not Compliance Aspirations

AI governance initiatives were driven by contractual obligations with immediate legal consequences. Teams face contract violations if they can't prove client data isn't flowing to public LLMs.

One Sage leader: "Currently we have very little to no visibility of GenAI tools being used in the business. This poses a significant risk as some of our clients will not allow the use of GenAI."

EU AI Act enforcement begins in 2026. NIST and ISO 27001 frameworks appeared frequently in H2 initiatives.

The Contract Violation Reality for 2026

AI governance is contract compliance for current obligations you're likely already violating through shadow AI. Client contracts prohibiting GenAI represent active legal exposure compounding with every employee query to ChatGPT containing sensitive data. Gain visibility immediately, implement DLP controls promptly, and create acceptable use policies enabling productivity while maintaining compliance.

The sophisticated approach is building approved AI environments with data controls, then training employees on approved tools rather than blocking all AI and watching workarounds. Allocate several days of compliance review time in Q1 for contract review and tool discovery. If you discover violations, remediate immediately and notify clients proactively rather than waiting for their auditors to discover violations and question your program's credibility.

Compliance Automation Platforms Eliminate Manual Evidence Collection

Audit burden grows while security teams don't. Manual evidence collection scales linearly with control count. Teams demanded platforms that generate auditor-ready evidence automatically, map controls to multiple frameworks simultaneously, and provide real-time compliance status.

One SOC 2 initiative: "This is a large SOC2 across our whole enterprise as we are moving into the software SaaS space. There is going to be a lot of evidence to gather so we are looking to add something that supports automation of evidence collection."

The Scaling Math for 2026

Manual collection becomes a liability as controls increase and frameworks proliferate. Because manual evidence collection costs scale linearly with control count, a team spending 200 hours per quarter is spending 800 hours per year, and that annual labor cost alone can justify investment in an automation platform. Single platforms mapping across multiple frameworks (SOC 2, ISO 27001, NIST CSF, HIPAA) deliver more value than separate tools because compliance teams can't scale linearly with framework growth.
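The arithmetic behind that claim is short enough to write down. The 200 hours per quarter comes from the text; the fully loaded hourly rate of $75 is purely an assumption for illustration and should be replaced with your own figure.

```python
def annual_evidence_cost(hours_per_quarter, hourly_rate):
    """Annual labor cost of manual evidence collection (four quarters per year)."""
    return hours_per_quarter * 4 * hourly_rate


HOURS_PER_QUARTER = 200     # figure used in the text
ASSUMED_HOURLY_RATE = 75    # hypothetical fully loaded rate in dollars

annual_hours = HOURS_PER_QUARTER * 4
annual_cost = annual_evidence_cost(HOURS_PER_QUARTER, ASSUMED_HOURLY_RATE)
```

Comparing `annual_cost` with a platform's annual price, and rerunning the calculation with the control count expected after adding another framework, gives a first-pass view of whether automation pays for itself.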

Boards are increasingly asking "How are you automating compliance for AI systems?" as organizations prepare for 2027 audits. Position yourself ahead of that question.

Top Risk Management & Compliance Vendors Sages Evaluated in H2 2025

Based on Sages’ H2 2025 initiatives, they most often considered these risk management platforms:

Application Security: Strategic Pilots Before Enterprise Commitments

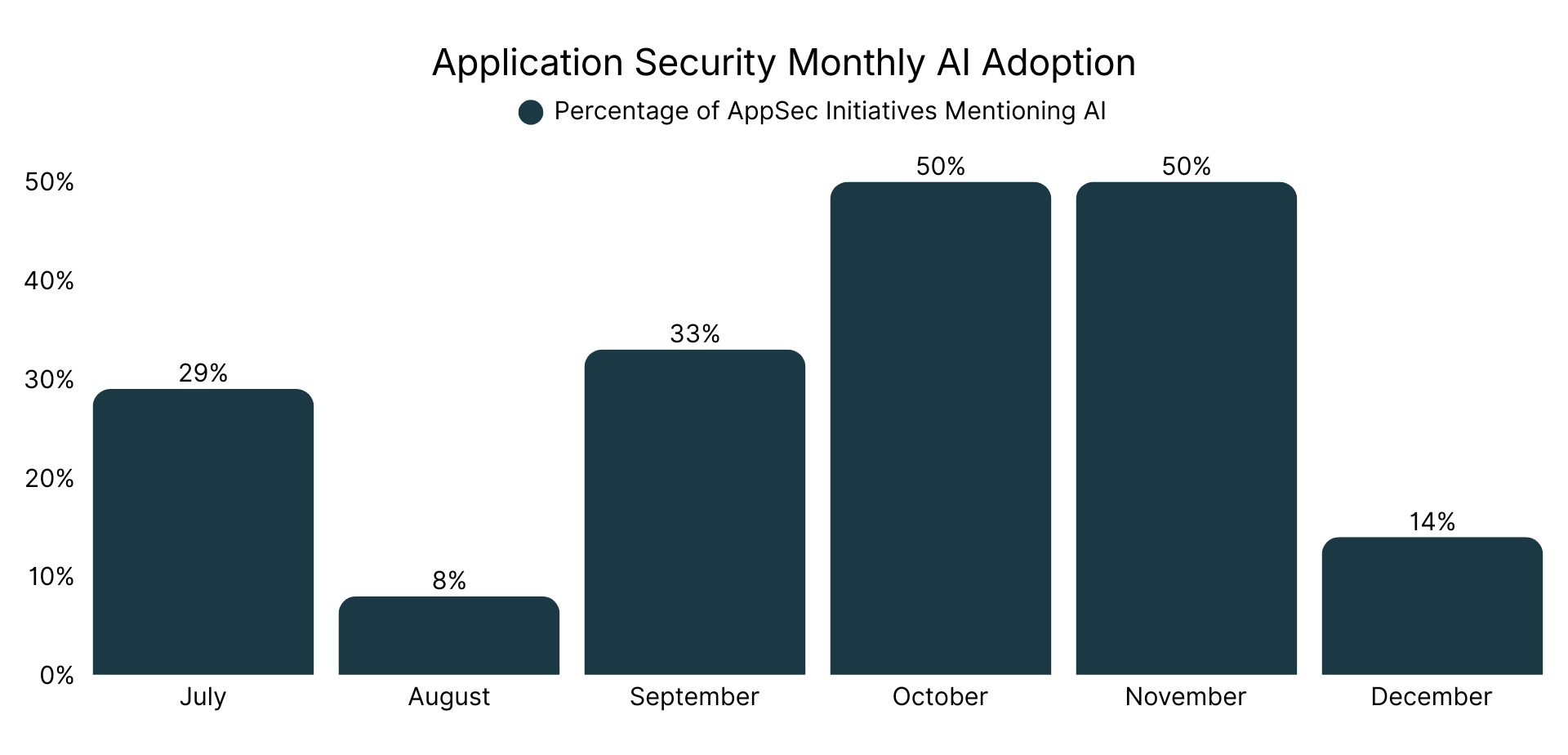

Application Security shows 23% AI adoption among the 47 initiatives in H2 2025, with monthly variation reflecting the experimental nature of AI deployment in this category.

The Vendor Consolidation Timing Strategy for 2026

The pilot approach is a sophisticated vendor viability assessment in an unconsolidated market. Running pilots on 10-20% of the codebase through H1 before committing to $200K+ enterprise licensing validates tool effectiveness while showing which vendors survive Q4 2026 consolidation. The market is fragmented and shows signs of potential consolidation, so Q1-Q2 enterprise commitments carry vendor viability risk.

Migrating AppSec AI tools mid-deployment is more disruptive than SIEM migration because they're embedded in developer workflows. Make enterprise decisions in H2, after consolidation clarifies which vendors have sustainable business models and which are acquisition targets running out of runway.

AI Coding Assistants Introduce Hidden Supply Chain Vulnerabilities

Developers using Copilot, ChatGPT, and similar AI coding assistants can introduce vulnerable dependencies that traditional security tools miss. Sages cited concern over AI-generated code introducing open-source dependencies that traditional SCA tools often overlook, especially transitive dependencies buried several layers deep in dependency trees.

One initiative explained their concern: "Enhance our software supply chain risk posture, with an emphasis on open-source dependencies introduced through AI-generated code."

AI-powered security tools become necessary to maintain pace with AI-accelerated development cycles. This creates an AI arms race: AI speeds development through code generation, forcing security to deploy AI just to keep up with the volume and complexity AI introduces into the codebase.

The Systematic Blind Spot for 2026

The supply chain gap from AI coding assistants is an active blind spot if developers use Copilot or ChatGPT for code generation. Traditional SCA tools that scan only manifest files can miss transitive dependencies several layers deep that AI suggestions introduce. As a result, AI-generated code can bring in hidden vulnerabilities that legacy tools aren’t always equipped to catch, creating risk blind spots in organizations that haven’t adapted their SCA workflows.

Audit your SCA tool now. Does it scan full dependency trees or just direct dependencies? Implement a policy requiring developers to flag AI-assisted commits, and run weekly dependency audits on AI-assisted code for early warning before production. Train developers on supply chain implications, budgeting 4-6 hours in Q1, because most don't realize Copilot suggestions can introduce vulnerable dependencies.
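The difference between scanning direct dependencies and scanning the full tree can be made concrete with a breadth-first walk over a dependency graph. This is a minimal sketch under assumed inputs: the graph, the package names, and the `dependency_depths` helper are hypothetical, and real SCA tools resolve the graph from lockfiles or package registries.

```python
from collections import deque


def dependency_depths(graph, direct):
    """Breadth-first walk returning {package: depth}; direct dependencies are depth 1."""
    depths = {}
    queue = deque((pkg, 1) for pkg in direct)
    while queue:
        pkg, depth = queue.popleft()
        if pkg in depths:  # already reached by a path that is at least as short
            continue
        depths[pkg] = depth
        for child in graph.get(pkg, []):
            queue.append((child, depth + 1))
    return depths


# Hypothetical graph: the manifest lists only web-framework
graph = {
    "web-framework": ["http-lib"],
    "http-lib": ["parser"],
    "parser": [],
}

depths = dependency_depths(graph, direct=["web-framework"])
transitive = sorted(pkg for pkg, depth in depths.items() if depth > 1)
```

A scanner that stops at the manifest sees only `web-framework`; the two packages in `transitive` are the ones it would never evaluate, which is the gap the audit question above is meant to expose.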

The arms race intensifies: developers increasingly rely on AI, meaning security must deploy AI-powered SCA to maintain coverage. Address this gap proactively or spend later quarters explaining why you're discovering supply chain vulnerabilities in production that should have been caught in development.

LLM Security Testing Requires New Tools and New Roles

Some H2 2025 initiatives addressed securing AI models embedded in applications — testing for prompt injection, jailbreaks, data leakage, and agentic manipulation.

Note: These LLM security initiatives represent an intersection between Application Security and AI Security and Governance, addressing the unique challenges of securing AI systems within software products.

Emerging testing practices include contextual jailbreak testing, semantic injection fuzzing, embedding toxicity scans, and adversarial prompt chaining simulations. Red teams require upskilling or partnering with specialized LLM security firms to execute quarterly assessments aligned with CI/CD pipelines.

The Talent and Capability Gap for 2026

For products embedding LLMs, implement testing immediately. Prompt injection, jailbreaks, data leakage, and agentic manipulation aren't future risks but current attack vectors your traditional program doesn't test. If you can't hire full-time given budget constraints, contract for quarterly LLM security assessments while building internal capability.

If your products contain LLMs and you haven't implemented LLM-specific testing, you have a systematic blind spot that competitors will eventually discover and exploit.

AI-Powered Application Testing Transitions from Experimental to Production

Sages are deploying AI platforms that continuously test applications, surfacing the vulnerabilities that matter and filtering out noise. Traditional SAST/DAST tools generate so many false positives that developers ignore their findings.

One initiative: "Replace legacy SAST/DAST scanning with an autonomous application security platform that provides continuous, human-level pentesting in CI/CD, reduces false positives, and accelerates remediation."

The False Positive Economics for 2026

High false positive rates from traditional tools train developers to ignore security findings, but AI-powered tools can reduce noise significantly by understanding code context. The strategic advantage is improved accuracy that builds developer trust: accurate findings get fixed quickly, whereas noisy findings cost developers triage time and breed skepticism.

Run 60-90 day pilots in Q1-Q2 with specific criteria: false positive rates, CI/CD integration, time to value. Before enterprise commitments, conduct vendor financial diligence because market consolidation means some vendors won't survive. Organizations deploying mature AI testing by Q3-Q4 2026 find vulnerabilities faster with less developer friction, while teams on legacy SAST/DAST struggle with false positive backlogs that slow remediation.

API Security Requires Dedicated Program Ownership and Budget

Several initiatives positioned API security as separate from API gateway security. Network perimeter security becomes irrelevant when APIs are directly exposed to the internet.

One objective: "Identify and possibly implement an API Security tool that provides comprehensive protection across the API lifecycle. The solution should deliver real-time threat detection, enforce security policies, offer detailed analytics, and integrate with existing infrastructure."

The Attack Surface Reality for 2026

API security can't be a gateway feature or AppSec subset; it requires dedicated program ownership covering the full lifecycle because APIs became the primary attack surface as applications decomposed into microservices. Most API vulnerabilities are authorization logic flaws introduced during development that runtime tools can't fix.

Implement API security testing in CI/CD in Q1-Q2 to shift left, catching authorization issues before merge. Assign dedicated ownership, with at least 20-30% of a role documented in performance goals, because diffused responsibility means no one owns outcomes.

As architecture decomposes into microservices, attack surface shifts to APIs. Organizations recognizing this fastest and building dedicated programs gain defensive advantage while competitors treat API security as someone else's secondary responsibility.

Top Application Security Vendors Sages Evaluated in H2 2025

Based on all H2 2025 AppSec initiatives, Sages most frequently considered these platforms:

Vendor Consolidation: Market Transition Signals

H2 2025 initiatives document three critical vendor market shifts: incumbent platforms losing ground to alternatives with better economics and AI-native architectures, growing interest in consolidated security suites over point solutions, and persistent vendor gaps around explainable AI, sustainable pricing models, and realistic change management expectations.

Documented Splunk Displacement: Multiple Replacements Signal a Broader Market Shift

The numerous Splunk replacement initiatives captured in Sagetap's H2 2025 data are documented, budgeted replacement decisions with Q1 2026 deadlines and executive approval.

What makes this significant is that these teams are long-time Splunk customers explicitly stating they're migrating away, with one team noting "We have been a long time customer of Splunk but recognize it is no longer the right SIEM for how we operate." This is an established customer base actively replacing the incumbent.

The pattern across all initiatives was consistent: ingestion-based pricing models that penalize organizations for collecting security data, a lack of AI-native capabilities in the core platform architecture, and Q1 2026 renewal deadlines that create a forcing function for a decision rather than an extension of the status quo.

The initiatives didn't specify replacement vendors (they were actively conducting evaluations), but consistently mentioned seeking "AI-native data pipelines," "next-generation SIEM," and platforms with pricing models that "retain full detection fidelity while improving efficiency and cost-effectiveness." Sages’ common requirements included AI capabilities built into the platform from inception rather than bolted on, pricing that doesn't penalize log collection, and an easier analyst experience.

The Market Indicator for 2026

These replacements are early indicators of a broader SIEM transition in which ingestion pricing becomes untenable as cloud environments generate exponentially growing log volumes. If the pricing that made Splunk a leader now drives an exodus, every vendor using similar pricing faces the same pressure.

When evaluating vendors, ask about customer growth rate; stagnant growth signals that buyers are rejecting the platform despite its strong market share. Prioritize AI-native, cloud-first architectures built for the next five years over on-prem legacy platforms with bolt-ons built for the past five.

Are you evaluating vendors based on where they are today or where they'll be in three years when your contract expires?

Security Suites vs. Point Solutions: Make Your Consolidation Decision

Some Sages are evaluating bundled suites from Microsoft, Palo Alto, and CrowdStrike rather than best-of-breed point solutions. One initiative mentioned: "using existing partners - CrowdStrike (endpoints) Palo Alto (firewalls) and Microsoft E5 security tools."

Pure-play SIEM vendors without adjacent capabilities may face increasing competitive pressure as organizations evaluate suite-based approaches.

The Architectural Strategy Decision for 2026

Suite versus best-of-breed is a strategic choice about optimizing for operational simplicity versus flexibility. Neither is wrong, but trying both creates confusion. For Microsoft E5 customers, evaluate Sentinel seriously, as economics and integration often favor the Microsoft ecosystem.

Market trends suggest increasing interest in suite-based approaches, with many organizations evaluating bundled security platforms alongside best-of-breed solutions. Commit deliberately to one side, because being stuck in the middle leaves you with both point-solution complexity and platform lock-in without the benefits of either.

Four Market Realities Many Vendors Won't Discuss Openly

Beyond product capabilities, H2 initiatives revealed persistent gaps between vendor marketing claims and operational realities that directly impact deployment success and long-term ROI.

AI washing problem: Every vendor claims "AI-powered" capabilities in marketing materials and product briefs. Sagetap's data shows teams asking the real differentiating question: "What happens when it gets it wrong?"

Real AI with genuine capabilities explains its reasoning process, provides confidence scores for decisions, enables human override for incorrect decisions, and shows training data provenance for audit trails. Marketing AI without substance deflects to "proprietary algorithms" without explanation, can't describe specific failure modes, and lacks audit trail capabilities.

Demand proof of AI decision reasoning with real examples from their platform. Request failure mode documentation. Vendors with genuine AI capabilities can explain their models. Vendors with marketing AI deflect to "proprietary" claims.
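One way to make these demands concrete during an evaluation is a simple evidence checklist per vendor. The sketch below is illustrative only: the `AIEvidence` fields restate the criteria above, and the structure and names are assumptions rather than a Sagetap scoring method.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class AIEvidence:
    """Evidence a vendor actually demonstrated (hypothetical checklist)."""
    explains_reasoning: bool
    provides_confidence_scores: bool
    supports_human_override: bool
    documents_training_provenance: bool
    documents_failure_modes: bool


def missing_evidence(evidence: AIEvidence) -> List[str]:
    """Return the names of criteria the vendor has not demonstrated."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


if __name__ == "__main__":
    # Hypothetical vendor that demos well but deflects on overrides,
    # provenance, and failure modes.
    vendor = AIEvidence(
        explains_reasoning=True,
        provides_confidence_scores=True,
        supports_human_override=False,
        documents_training_provenance=False,
        documents_failure_modes=False,
    )
    print(missing_evidence(vendor))
```

Recording a criterion as met only after seeing a real example from the platform, not a slide, keeps the checklist aligned with the question teams are asking: what happens when it gets it wrong?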

Ingestion-based pricing creates perverse incentives: Sages repeatedly mentioned "cost bloat" and "significant costs incurred based on ingest" when describing SIEM challenges in their initiatives. Ingestion-based pricing penalizes organizations for collecting security data; the more comprehensive your monitoring coverage, the higher your costs. This creates a perverse incentive to reduce log collection to control costs, directly undermining security effectiveness and visibility.

Alternative pricing models emerging in the market include flat-rate pricing (unlimited ingestion within reasonable use), user-based pricing (per analyst seat), asset-based pricing (per monitored endpoint or workload), and hybrid models (base platform fee plus reasonable consumption tiers).

Sages evaluating alternative pricing models sought to reduce costs while maintaining full detection fidelity, noting that ingestion-based pricing creates pressure to collect less data despite security needs.
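The arithmetic behind that pressure can be sketched with a small comparison. Every rate, volume, and headcount below is a hypothetical placeholder chosen for illustration, not a vendor quote or a figure from Sagetap's data; substitute your own numbers when modeling a renewal.

```python
# All rates are hypothetical placeholders, not vendor quotes.
INGEST_USD_PER_GB_DAY = 600          # annual price per GB/day ingested
FLAT_RATE_USD = 250_000              # annual flat platform fee
SEAT_USD = 15_000                    # annual price per analyst seat
ASSET_USD = 40                       # annual price per monitored asset
HYBRID_BASE_USD = 100_000            # annual base fee for the hybrid model
HYBRID_INCLUDED_GB_DAY = 200         # volume included in the base fee
HYBRID_OVERAGE_USD_PER_GB_DAY = 300  # annual price per GB/day above that


def annual_costs(gb_per_day: int, analysts: int, assets: int) -> dict:
    """Annual cost in USD under each pricing model for one environment."""
    overage = max(0, gb_per_day - HYBRID_INCLUDED_GB_DAY)
    return {
        "ingestion": gb_per_day * INGEST_USD_PER_GB_DAY,
        "flat_rate": FLAT_RATE_USD,
        "per_seat": analysts * SEAT_USD,
        "per_asset": assets * ASSET_USD,
        "hybrid": HYBRID_BASE_USD + overage * HYBRID_OVERAGE_USD_PER_GB_DAY,
    }


if __name__ == "__main__":
    # Same team and asset count; only log volume doubles.
    before = annual_costs(gb_per_day=300, analysts=12, assets=5000)
    after = annual_costs(gb_per_day=600, analysts=12, assets=5000)
    for model in before:
        print(f"{model:>10}: {before[model]:>9,} -> {after[model]:>9,}")
```

Under these assumed rates, doubling log volume doubles the ingestion-based bill while the flat-rate, per-seat, and per-asset figures do not move, and the hybrid model grows only on the overage. The absolute numbers are arbitrary; the point is which models tie cost to the amount of security data collected.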

Vendor quotes exclude the largest cost drivers: Vendor pricing discussions often focus exclusively on license costs, but initiatives consistently referenced change management and analyst training needs that vendor quotes don't address, leaving the true implementation investment unclear.

Sales conversations rarely address analyst acceptance timelines, dual-platform operation risks, or the organizational change required for ROI realization. The gap isn't intentional; vendor sales processes evolved around product capabilities and pricing, not implementation complexity.

But the result is the same: organizations enter deployments with budget assumptions that don't account for the change management investment that determines success. Buyers need to proactively surface these costs during procurement, because standard vendor quotes won't reflect them.

AI doesn't solve talent problems alone: Multiple Sages’ initiatives mentioned seeking AI platforms "without expanding headcount." If you fundamentally can't hire SOC analysts due to market constraints, buying AI tools alone won't solve your SOC staffing problem comprehensively.

AI automates repetitive tasks that would require Tier 1 analysts you don't have, handles alert volume at scale humans physically can't match, and performs consistent analysis without fatigue. But AI doesn't replace the need for skilled senior analysts on complex investigations, eliminate requirements for SOC leadership and program management, remove necessity for threat hunting expertise, or substitute for incident response capabilities.

Deploy AI for Tier 1 triage automation, then redirect hiring budget from Tier 1 positions you can't fill toward Tier 2 and Tier 3 analyst roles where human expertise remains key.

Why Security Teams Are Outpacing Vendor Roadmaps

The data reveals a story traditional analyst reports miss because they often focus on vendor announcements rather than practitioner actions: security practitioners are ahead of vendors in AI deployment urgency and sophistication. Sages aren't waiting for vendor roadmap completion, market analyst consensus, or industry "best practices" to emerge. They're making decisive moves across Threat Detection & Response, Risk Management & Compliance, and Application Security.

- In Threat Detection & Response, teams migrated away from legacy SIEM platforms that couldn't scale economically with cloud architectures, deployed AI for SOC automation to fill positions they fundamentally can't hire for, and began experimenting with agentic AI that executes playbooks autonomously.

- In Risk Management & Compliance, boards demanded quantified breach costs in dollars rather than qualitative dashboards, driving rapid adoption of AI risk quantification platforms, while teams replaced TPRM vendors that "boxed them in" with rigid, non-customizable risk scoring.

- In Application Security, teams ran strategic pilots before enterprise commitments, validating AI-powered testing tools while waiting for inevitable vendor consolidation, and began addressing the systematic blind spot created by AI coding assistants introducing vulnerable dependencies traditional SCA tools miss entirely.

The common thread across all three categories: Sages are deploying AI because they're solving immediate operational crises that manual processes and legacy platforms simply cannot address. The talent shortage, board mandates, and AI-accelerated development cycles created forcing functions that made AI adoption essential.

Organizations that will win in 2026 make decisive vendor decisions before renewal deadlines create rushed evaluations, allocate significant budget to change management rather than treating implementations as purely technical work, demand explainable AI with documented reasoning beyond marketing claims, present cyber risk in quantified financial terms boards actually use for resource allocation, and run strategic pilots before enterprise commitments in fragmented markets.

Organizations that will struggle in 2026 wait for industry consensus before taking action when market transitions are happening now, buy AI tools without addressing root cause staffing problems and broken processes, continue presenting qualitative risk assessments boards have explicitly rejected, treat AI deployment as experimental proof-of-concept while competitors deploy at production scale, and commit to immature vendor platforms before market consolidation clarifies which will survive.

Table stakes reality check: By Q4 2026, AI capabilities in cybersecurity won't differentiate leading organizations from average organizations. AI will be table stakes (the minimum capability required to compete effectively). The strategic question is whether you'll lead the AI transition and gain early-mover advantages, or be forced into AI adoption by competitive pressure from organizations already operating more efficiently, persistent talent shortages that leave positions unfilled, and board mandates demanding capabilities you don't have.

Sagetap's H2 2025 data documents the early movers making this transition now across all three categories analyzed in this report. The Sage leaders documenting their initiatives, sharing evaluation outcomes, and holding vendors accountable are building the intelligence infrastructure that helps the entire security community make better decisions faster.

Are you leading this transition or following it? Sages are already deep into 2026 planning, documenting their next wave of initiatives on Sagetap, and sharing intelligence with each other about which vendors actually deliver on promises.

Join Sagetap to Access the Intelligence Behind Enterprise Security Decisions

Sagetap is an exclusive community where the industry's most experienced CISOs and security executives share intelligence about what actually works in security leadership. Our Sages document their buying decisions, vendor evaluations, and implementation outcomes in real time, creating an unmatched resource for fellow leaders navigating complex technology decisions.

Connect with verified CISOs and other security leaders who've already evaluated the tools you're considering. Learn from practitioners who've completed SIEM migrations, deployed AI SOC automation, or implemented risk quantification platforms.

Document your requirements and track evaluations in one place. Manage vendor assessments, score solutions against your criteria, and maintain complete evaluation recordings for stakeholder review.

Discover personalized recommendations based on your actual security initiatives. See which vendors peers with similar challenges selected and why they made those decisions.

Engage with complete anonymity until you're ready. Control every vendor interaction, maintain privacy throughout your evaluation, and connect with peers without pressure.

Monitor real outcomes at every evaluation stage. Track which implementations succeeded, which migrations faced challenges, and which vendors delivered on promises versus making marketing claims.

Shape the future of security technology. Your documented initiatives and vendor feedback help the entire community make better decisions while holding vendors accountable to practitioner needs.

Published February 23, 2026 by Sagetap

Human Intelligence. Smarter Technology Decisions.

Analysis based on 264 cybersecurity initiatives created on the Sagetap platform from July 1 - December 31, 2025. This report focuses on the three security categories with the highest AI adoption: Threat Detection & Response (125 initiatives, 40% AI), Risk Management & Compliance (92 initiatives, 36% AI), and Application Security (47 initiatives, 23% AI).

Methodology: This report analyzed documented security initiatives from verified CISOs and security executives on the Sagetap platform. All statistics are calculated from initiative data, and vendor intelligence comes from initiatives. Market predictions are labeled as Sagetap analysis and grounded in observed H2 2025 patterns.

Vendor Selection Methodology: Vendor lists throughout this report reflect the platforms Sages evaluated most often in documented H2 2025 Sagetap initiatives within each category. When Sages create initiatives on Sagetap, they identify vendors they're evaluating, from initial consideration through proof-of-concept and purchase decisions. Inclusion in this report indicates evaluation frequency among practitioners during H2 2025, not endorsement or comprehensive market coverage. Many excellent vendors may not appear due to category scope, evaluation timing, or the specific focus areas analyzed in this report.
